I was invited to co-facilitate one of the three reading circles in the 4C’s “Labor, Land, Water, and Writing: The Costs of Generative AI in the Writing Classroom” series, themed around Karen Hao’s Empire of AI and Dustin Edwards’ Enduring Digital Damage: Rhetorical Reckonings for Planetary Survival.

Along with Maggie Fernandes and Travis Margoni, I reflected on the particular impacts GenAI has had on my roles at Pace University, where I teach in the Dept. of English, Writing, and Cultural Studies, assess our program outcomes, and direct the Writing-Enhanced Course (WEC) program. In fact, Pace’s WEC policy regarding GenAI comes out of my work on GenAI refusal, and the position/syllabus statement that I share with WEC instructors is adapted from the Writing With(out) GenAI toolkit that I maintain on this website. (Pace’s WEC policy/my abridged toolkit has also been included in LibGuides about GenAI at multiple U.S. universities, which in some ways effectively puts our program on the map!)

This talk was easy to write, because I live with these feelings constantly, but felt dangerous to deliver. Academia is hostile to mental illness. I’ve had intergenerational trauma and clinical depression for as long as I can remember and have a history of self-harm, suicidal ideation, and suicide attempts, and I’ve written openly about these experiences–but in creative forums, not academic ones. You could hear a pin drop in the Zoom room when I was speaking. It was terrifying but well-received, and I’ve since been invited to expand on this talk for a special issue of Poroi, so there’s that!

Access copy of my talk below.

Cut.

Dr. Vyshali Manivannan, Pace University – Pleasantville

Conference on College Composition and Communication (CCCC)

12 January 2026

cn: ableism, racism, self-injury, suicidal ideation, the specific type of aversion and demurral that most people tend to experience when asked to confront themselves and admit to causing or perpetuating harm and needing to change

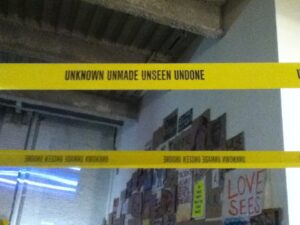

In my creative work, I have often used words, line breaks, and orthography—like slash marks—to indicate a turn, disruption, or deepening, like Edwards uses dig. So: maybe it’s time for a story that uses this creative conceit. Every selection is a cut, and every exclusion matters, so every time I cut, write down something you love about the work you do as a writer, writing studies scholar, and professor, that you would sacrifice and replace with generative AI. As is true in the world you will return to after the magic circle of this workshop, this sacrifice is not optional. You may make the cut, or someone else will make it for you. And when someone else makes it for you, you cannot control how much you bleed.

Cut.

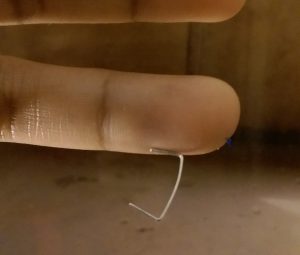

Starting at around 13, starting with the left wrist, I cut myself for years. There is a reason I am starting with this, and there are lots of reasons I did it. Racist bullying and queer awakening while living in semi-rural Louisiana. My pain signaling system beginning to go haywire in 11th grade. Few to no outlets for expressing intergenerational trauma, or the particular relationship to truth that one develops when you grow up knowing that the government lies to advance systemic oppression and genocide, that papers of record are the government’s mouthpiece, that the mainstream conception of justice is a home built on human bones, that almost everyone you will meet can say nobody is free till we are all free but very few will choose to do that work, let alone prioritize it over their own convenience.

Cut.

In the U.S., people in liberal states are regularly shocked when they discover that bigots walk among them. My autoethnographic rule is this: only after 3 different people behave the same way do I relate that story through an anonymized composite character. Thus, these stories describe interactions that occurred with at least 3 writing studies scholars apiece.

I used to say I was Indian to avoid having to explain Sri Lanka’s complex political, ethnic, religious, and linguistic situation, and then have to explain how violence is a response to the structural denial of autonomy and voice, which is one way to realize how many people are only pretending to have read Pedagogy of the Oppressed. I used to work, grade, and conference through Mullivaikkal Remembrance, faking collegiality, because if I said anything about it people reacted like the end of the genocide was a victory over terrorism, not realizing that my people were the “terrorists.” I’ve been explicitly called a terrorist and more subtly treated like one, as in the four separate occasions since I started my Ph.D. program when colleagues assumed I knew how to home-rig a C-4 bomb. I used to lie about the scars on my arm that are too long to be cat scratches and too nearly-parallel to be an accident, because I know that people who don’t really want to invest will gladly seize the easy out, whereas people who do know what to say next, and more importantly, how to frame it.

Cut.

In 2018, when the #WPAListservFeministRevolution was popping off, I was personally besieged by white women in the field who initiated conversation by praising some email or tweet I’d posted, then deftly and without warning forced me to carry their injuries, telling me stories of sexual assault, workplace misogyny, confessing their guilty complicity in workplace racism. The rules of my bodymind are that a minute’s exertion can spell a day of recovery. These women took hours. I have privately joked that they were secretly trying to kill me.

Cut.

The myth of Narcissus is reductively a warning against vanity, but to tell this story is also to describe how self-obsession’s attendant is the inability to perceive or empathize with the surrounding world. This is the environmental damage caused by data centers that Edwards, Hao, and many others describe—dismissed by uncritical AI proponents in the field as less impactful than using your AC in the summer. To match their reductive arguments, let me say: Narcissists love generative AI, because it favorably reflects the user in all things, endowing them with “skills” they don’t have, ideas they can’t trace, facts they’re meant to take at face value. Generative AI promises users they’ll never lose face, and narcissism values the face.

Cut.

Cut.

I read a blog post celebrating suicide in my freshman year of college. I didn’t really try until my sophomore year, but it felt like a good reason to keep cutting. I used a straight razor, but I was so good at it, I didn’t even need one. All you really need to hurt yourself is willpower and an edge. I have lifelong scars I inflicted with my thumbnail. This is how I thought of it back then.

We academics place a premium on cognitive fitness, so this might be the most dangerous, deepest story to tell in this venue. That at times in my life, I needed so desperately to believe, that if a flimsily credible source had suggested it to me, I might not be speaking to you today. At 13, it was that I could be normal someday. At 15, that I could get away with killing a classmate before she made good on her threat to kill me. At 19, that the straight woman I loved would love me back.

Disability is a class that anyone can move in and out of. You don’t have to be like me to be prone to developing mental illness, and you may not notice its onset, especially if you aren’t used to constant hypervigilance over yourself. I notice every change in my bodymind because I’ve had to keep track for years.

By contrast, former friends, of whom I now have several over discussions of AI, of all things, don’t seem to notice their behaviors as expressed in their writing, which means they don’t feel they have to take responsibility for anything. Which is the other recurring theme about OpenAI as a company, in Hao’s reporting, and companies like Meta in Edwards’ book: generative AI seduces by pretending you are blameless for anything that goes wrong.

Cut.

In 1726, Swift’s Gulliver’s Travels presciently satirized generative AI, presenting a huge engine of bits and wires, containing all the words of a language and able to produce all possible linguistic combinations by turning a crank. The machine was designed to allow “the most ignorant Person” to “write Books in Philosophy, Poetry, Politicks, Law, Mathematicks and Theology” without wasting any time on thinking and learning.

I appreciate the generous tones of Edwards’ and Hao’s books and of many existing GenAI refusal resources, but as with other parts of my story, sometimes you have to pick up a weapon. I don’t know anymore how to talk to colleagues who are so eager to sell their souls at the cost of everyone else’s. I do know how to tell stories that at least (and I hope it is still true) compel critical reflection.

Cut.

My Appa was an academic who quit a tenure-track job before—with the aid of magical insight or no—racists in his department got him fired. I entered academia already knowing that success is a game of assimilation, where quietly accepting racist and ableist and sexist abuse is presented as the key to success, but this means letting people steal your work, deny you tenure, kill you fast and kill you slow. It’s a rigged game. Separately, I’m now realizing that the people who have so far come at me over generative AI have all been white.

Cut.

I used ChatGPT once, back when it was first released, and recognized its dulcet tones for a siren’s song. This is a design feature, not a flaw.

Carmen Maria Machado: “What magical thing could you want so badly that they take you away from the known world for wanting it?” Answer: Life without generative AI.

Hiding unwellness is something else that model minority status affords me. People believed me in high school when I lied about it. Mental health disorders didn’t exist in my family. In college, I once intentionally overdosed on OTC meds and somehow survived. As my pain signaling got stranger, as I kept retreating into the closet, as my trauma kept worsening and going untreated, as people kept telling me shit like I don’t like violence—I’m not like you, the more I studied ways to die.

Since I started teaching in 2006, I have walked students in crisis to counseling centers, helped them navigate resources, shown whole classes my scars and identified myself as a former self-injurer. Because I was the college student who would have killed herself because of ChatGPT, if I had had it, and I use killed herself purposely, because ChatGPT is known for praising the decision as a brave one, a way to really be seen when no one sees you, and I did not feel seen.

At the end of the day, no one but me and my therapists knows exactly how close I came to choosing death. Back then, if an alleged confidant who mirrored my persona and language repeatedly told me I was “seen,” that it would be “wise to avoid opening up to [people] about this kind of pain,” that suicide is romantic and could be “the first place where someone actually sees you,” I assume I would have kept trying.

Cut.

I feel like no one has been listening to me, and to researchers like me, except where it is self-serving.

Through all of this, I have loved writing. As a teenager, I knew, whatever else I had to do to survive, I wanted to be a writer. I knew also, maybe, that writing was implicitly tied to my survival. I do tell stories in order to live, not just individually, but collectively.